REVIEW PAPER

Irreproducibility – The deadly sin of preclinical research in drug development

1 International Institute of Biotechnology and Toxicology (IIBAT), Padappai, India

2 Ex-Cabinet Secretary Researcher, Food Safety Commission of Japan, Akasaka, Japan

3 Trichinopoly.i, Tiruchirappalli, India

4 K.K. College of Pharmacy, Chennai, India

Corresponding author

Sadasivan Kalathil Pillai

International Institute of Biotechnology and Toxicology (IIBAT), Padappai, Kancheepuram Dist, India

J Pre Clin Clin Res. 2020;14(4):165-168

ABSTRACT

Introduction:

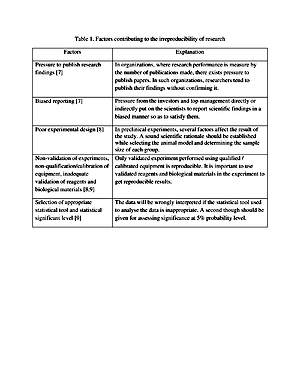

In recent years, the irreproducibility of preclinical studies has become a serious concern in drug development research. Preclinical findings that cannot be reproduced drain public resources and slow down the drug discovery process. Among the various factors that contribute to irreproducibility in preclinical drug development research, poor statistical analysis and weak experimental design play a major role in the failure of drugs in clinical research.

Objective:

The aim of this review is to describe key factors, such as poor statistical analysis and weak experimental design, that contribute to the irreproducibility of preclinical studies in drug development, and how such studies slow down the drug development process.

Brief description of the state of knowledge:

The irreproducibility of preclinical research is a serious issue that researchers, especially those involved in drug discovery, face today. Irreproducible research drains public resources and time, and diminishes public trust in the research community. The factors that contribute to the irreproducibility of preclinical research relate to experimental design and improper statistical analysis of the experimental data. Most of these factors can be eliminated if researchers develop a commitment to science and society.

Conclusions:

Poor experimental design and limited or no knowledge of statistical data analysis contribute significantly to the irreproducibility of preclinical research. A well-designed experiment, with proper statistical analysis of the data and conducted by committed researchers, rarely fails to reproduce.

Sadasivan Kalathil Pillai, Katsumi Kobayashi, Mathews Michael, Meena A. Irreproducibility – The deadly sin of preclinical research in drug development. J Pre-Clin Clin Res. 2020; 14(4): 165–168. doi: 10.26444/jpccr/131017
